The common mistake across all four platforms: treating AI as a set-and-forget button. It is not.

Every platform's AI requires different inputs, different guardrails, and different review cycles. Google needs weekly bid reviews. Meta needs a creative refresh every two weeks.

LinkedIn needs audience testing monthly. TikTok needs new hooks constantly.

Learn each platform's AI rhythm. That is how you stay in control.

The Part AI Cannot Do

AI cannot tell you whether a cheap lead is a good lead.

Victor showed me this clearly. Spain's budget had grown 37-fold. Leads had grown only 9-fold.

CPL exploded and never dropped back below 30. The algorithm kept spending into cold audiences while the system thought it was still in growth mode.
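The size of that CPL jump follows directly from those two numbers. CPL is spend divided by leads, so when spend grows 37-fold and leads grow 9-fold, CPL grows by 37 / 9, roughly 4.1x. A minimal Python sketch of that arithmetic, using only the two growth multiples above (the function name is just for illustration):

```python
def cpl_multiplier(spend_growth: float, lead_growth: float) -> float:
    """How many times CPL grows when spend and leads grow by the given multiples.

    CPL = spend / leads, so the CPL multiple is spend_growth / lead_growth.
    """
    if lead_growth <= 0:
        raise ValueError("lead_growth must be positive")
    return spend_growth / lead_growth


# Spain: budget grew 37x, leads grew 9x.
spain = cpl_multiplier(37, 9)
print(f"CPL multiplied by about {spain:.1f}x")  # prints "CPL multiplied by about 4.1x"
```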

The AI found the pattern. It told Victor the numbers.

But it could not tell him why Spain was still worth targeting at a higher CPL. It could not say whether Latin America's cheap leads turned into paying customers.

That answer lives in the CRM. Not the ad platform.

Victor said: "If we don't have the context of the client, you don't need to just chase the cheaper cost per lead."

Two different questions: what the numbers say, and whether those leads are worth paying for. The AI answers the first. You answer the second.
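To show why the answer lives in the CRM, here is a minimal sketch that joins ad-platform numbers with CRM outcomes and compares cost per lead against cost per paying customer. The market names echo the example above, but every figure is hypothetical, and the field names are assumptions, not any platform's or CRM's export format.

```python
from dataclasses import dataclass


@dataclass
class MarketResult:
    market: str
    spend: float    # ad spend, from the ad platform
    leads: int      # leads reported by the ad platform
    customers: int  # leads that became paying customers, from the CRM

    @property
    def cpl(self) -> float:
        return self.spend / self.leads

    @property
    def cost_per_customer(self) -> float:
        # Infinite when no lead converted: the cheap leads bought nothing.
        return self.spend / self.customers if self.customers else float("inf")


# Hypothetical figures, for illustration only.
markets = [
    MarketResult("Spain", spend=9000.0, leads=200, customers=20),
    MarketResult("Latin America", spend=3000.0, leads=300, customers=5),
]

for m in markets:
    print(f"{m.market}: CPL {m.cpl:.2f}, cost per customer {m.cost_per_customer:.2f}")
```

With these made-up inputs, Latin America wins on CPL (10 vs 45) but loses on cost per customer (600 vs 450). The ad platform alone only ever shows the first comparison.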

The same applies to prompt engineering for PPC. The difference between a useful AI output and a useless one is almost always the input.

Feed it your client's business context. Not just the campaign data.
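One way to make that a habit is to keep the client context in its own block and always send it alongside the campaign numbers. The sketch below only assembles a prompt string and calls no AI service; the function name, context fields, and sample values are hypothetical.

```python
def build_analysis_prompt(client_context: dict[str, str], campaign_summary: str) -> str:
    """Assemble an analysis prompt that pairs business context with campaign data."""
    if not client_context:
        raise ValueError("client_context is empty; add business context first")
    context_lines = "\n".join(f"- {key}: {value}" for key, value in client_context.items())
    return (
        "You are analyzing paid media performance for an agency client.\n\n"
        "Business context:\n"
        f"{context_lines}\n\n"
        "Campaign data:\n"
        f"{campaign_summary}\n\n"
        "Answer in three parts: what happened, why it happened, one recommendation."
    )


# Hypothetical context and data, for illustration only.
prompt = build_analysis_prompt(
    {
        "Goal": "paying customers, not raw lead volume",
        "Lead quality": "check CRM close rates by market before judging CPL",
    },
    "Spain: spend up 37x, leads up 9x over the period.",
)
print(prompt)
```

Raising on an empty context is a deliberate choice: it makes "campaign data only" prompts fail loudly instead of quietly producing generic analysis.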

How to Measure Whether AI Is Actually Working

Most agencies add AI tools and never measure whether they made a difference. They feel faster. They think results improved.

But they never track it.

I track three numbers.

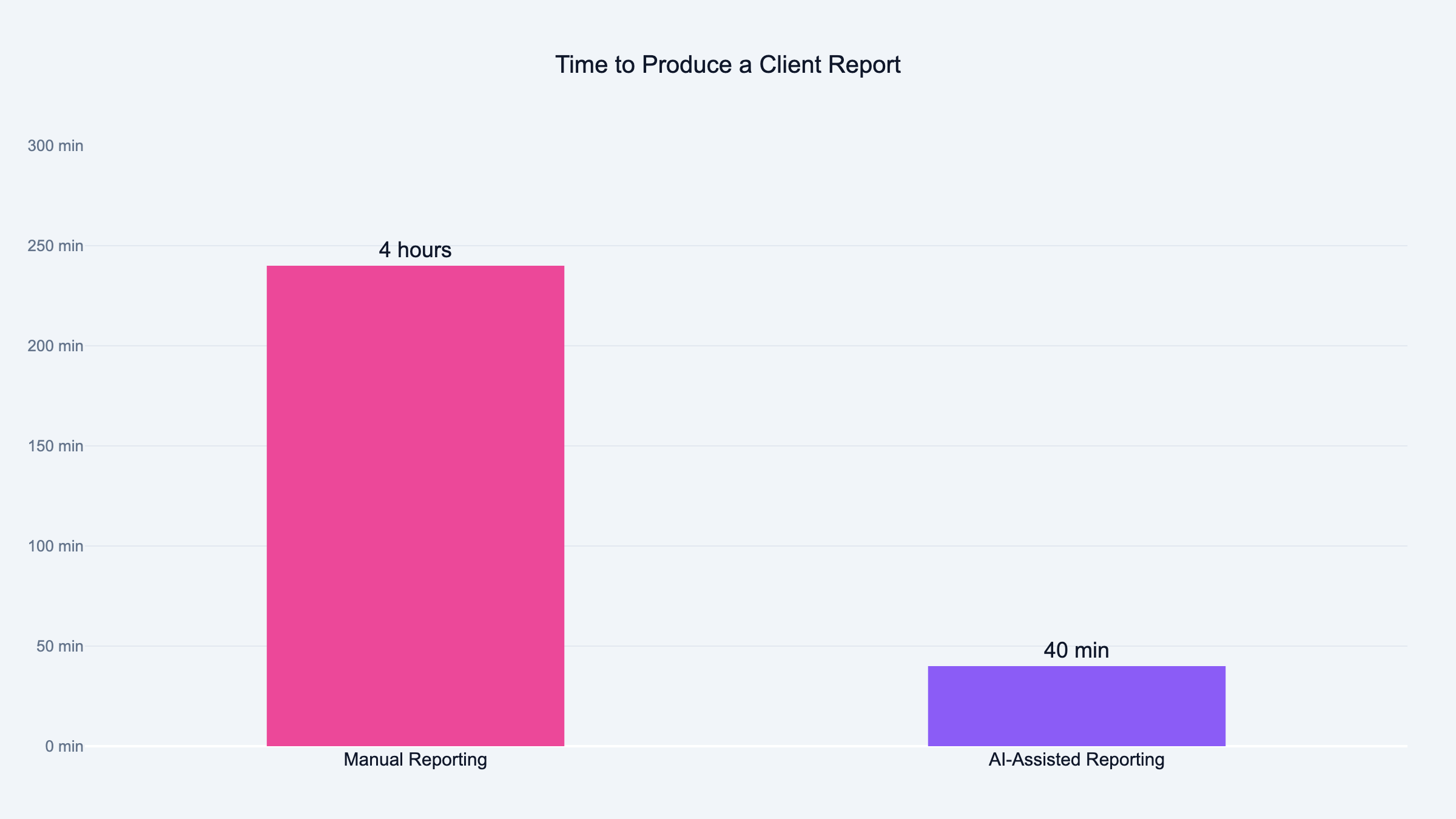

First: time per report. Before AI analysis, Victor spent two hours per client report. After: about 40 minutes. We tracked this across five client accounts over six consecutive weeks, logging start and end times for each report cycle.

That is measurable. Put it on a spreadsheet. Track it monthly.
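If the log lives in a spreadsheet, the monthly number is just two averages. A minimal Python sketch, assuming you record minutes per report before and after; the sample minutes are hypothetical, picked to average two hours before and 40 minutes after:

```python
from statistics import mean


def percent_reduction(before_minutes: list[float], after_minutes: list[float]) -> float:
    """Percent drop in average time per report, from logged minutes."""
    before, after = mean(before_minutes), mean(after_minutes)
    return 100 * (before - after) / before


# Hypothetical log: minutes per report, before and after AI analysis.
before = [120, 115, 125, 120]
after = [40, 45, 35, 40]
print(f"{percent_reduction(before, after):.0f}% less time per report")  # prints "67% less time per report"
```

Going from 120 to 40 minutes is a reduction of about two-thirds.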

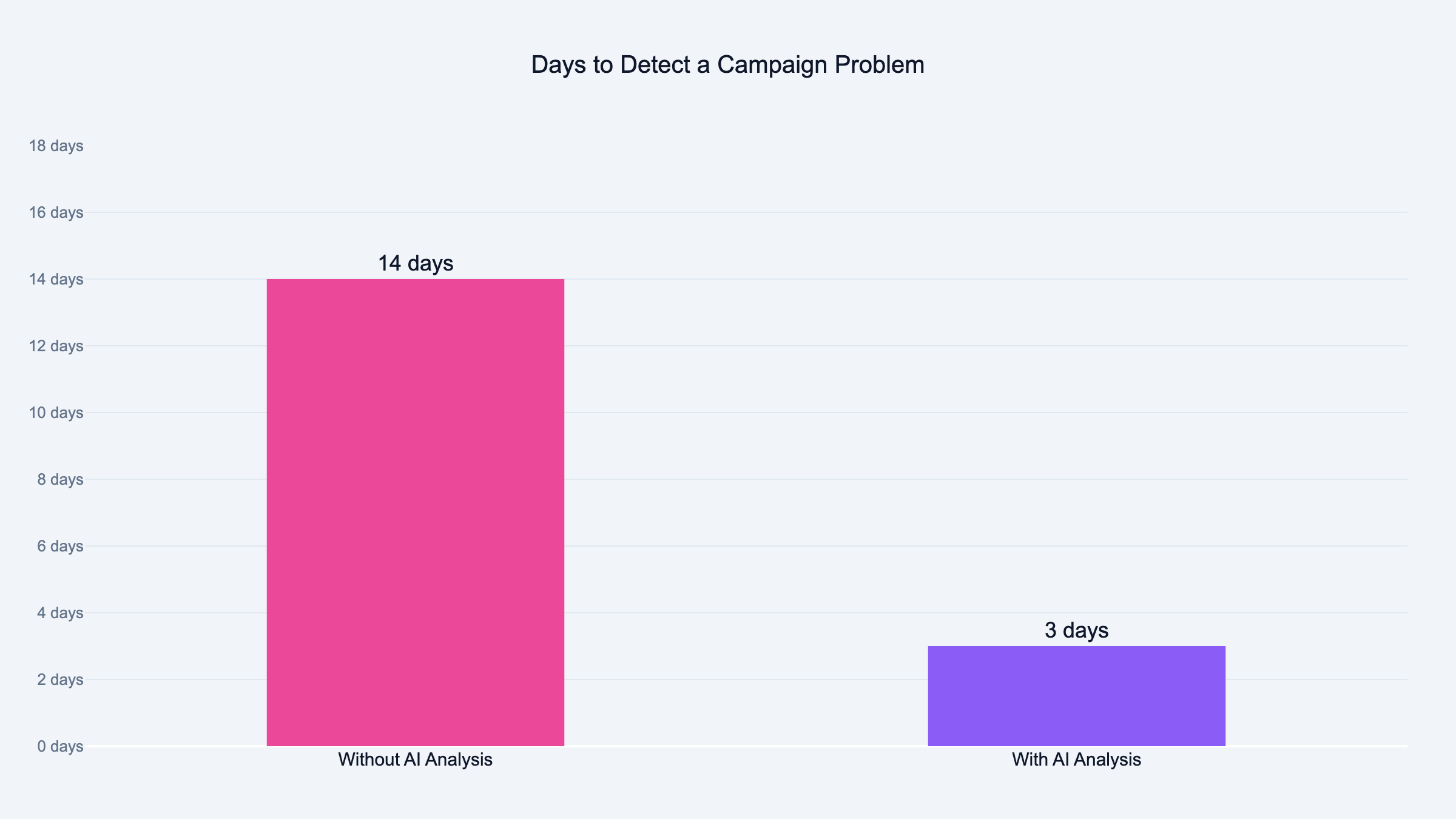

Second: time-to-insight. How many days between a problem appearing in the data and someone catching it.

Before AI, that gap was sometimes two weeks. Often we only caught the problem when the client asked about it.

Now we catch it in one to three days. Often before the client sees the numbers. We measured time-to-insight across four client accounts over two months by logging when each issue first appeared in the data versus when we flagged it.
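That logging method reduces to a date subtraction per issue. A minimal sketch, assuming each issue is recorded as a pair of dates (first appeared, flagged); the dates below are hypothetical:

```python
from datetime import date
from statistics import median

# Hypothetical issue log: (date the issue first appeared in the data, date we flagged it).
issues = [
    (date(2026, 1, 5), date(2026, 1, 7)),
    (date(2026, 1, 12), date(2026, 1, 13)),
    (date(2026, 2, 2), date(2026, 2, 5)),
]


def days_to_insight(log: list[tuple[date, date]]) -> list[int]:
    """Days between an issue appearing and being flagged, one value per issue."""
    return [(flagged - appeared).days for appeared, flagged in log]


gaps = days_to_insight(issues)
print(gaps, "median:", median(gaps))  # prints "[2, 1, 3] median: 2"
```

The median is a reasonable headline number here because one slow catch would otherwise dominate a small sample.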

Third: recommendations per report. Most agency reports end with "continue to improve targeting." That is not a recommendation.

That is filler.

AI analysis makes one specific call per report. "Pause Spain. Move budget to France."

After 30 days, check whether those calls were right.
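A small log is enough to keep that review honest. The sketch below flags recommendations that are at least 30 days old and still unjudged, and computes the share of judged calls that turned out right. The class, function names, and log entries are hypothetical; only the first recommendation text comes from this article.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

REVIEW_AFTER = timedelta(days=30)


@dataclass
class Recommendation:
    text: str
    made_on: date
    was_right: Optional[bool] = None  # set at review; None means not judged yet


def due_for_review(recs: list[Recommendation], today: date) -> list[Recommendation]:
    """Recommendations at least 30 days old that nobody has judged yet."""
    return [r for r in recs if r.was_right is None and today - r.made_on >= REVIEW_AFTER]


def hit_rate(recs: list[Recommendation]) -> Optional[float]:
    """Share of judged recommendations that turned out right (None if none judged)."""
    judged = [r for r in recs if r.was_right is not None]
    if not judged:
        return None
    return sum(r.was_right for r in judged) / len(judged)


# Hypothetical log.
recs = [
    Recommendation("Pause Spain. Move budget to France.", date(2026, 1, 10), was_right=True),
    Recommendation("Cut Display budget by half.", date(2026, 1, 24), was_right=False),
    Recommendation("Test a new hook on the top ad set.", date(2026, 2, 20)),
]
print(hit_rate(recs))  # prints 0.5
print([r.text for r in due_for_review(recs, date(2026, 3, 25))])
```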

If your AI tools do not move at least one of those three numbers, they are not helping. They are just making you feel modern.

The agencies I trust do not talk about AI. They talk about what changed after they started using it.

Where to Start If You Run Paid Ads

Start with reporting. Not creative. Not bidding.

Reporting.

Reporting is the task every agency runs every week. It eats the most hours. And the output is visible to your clients.

Set up one AI-generated report for one client. Include three sections: what happened, why it happened, one recommendation.

Show the client.

Victor did this with his client Pablo. The reaction was not about AI. It was about the depth of insight.

Pablo asked when the next report was coming. That is the proof.
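The three-section structure for that first report is simple enough to enforce in code. A minimal sketch, assuming the AI output is pasted into each section; the section names come from the text, while the class name and sample content are hypothetical:

```python
from dataclasses import dataclass, fields


@dataclass
class ClientReport:
    client: str
    what_happened: str
    why_it_happened: str
    recommendation: str  # one specific call, e.g. "Pause Spain. Move budget to France."

    def __post_init__(self) -> None:
        # Refuse to build a report with an empty section.
        for f in fields(self):
            if not getattr(self, f.name).strip():
                raise ValueError(f"section '{f.name}' is empty")

    def render(self) -> str:
        return (
            f"Report for {self.client}\n\n"
            f"What happened\n{self.what_happened}\n\n"
            f"Why it happened\n{self.why_it_happened}\n\n"
            f"Recommendation\n{self.recommendation}\n"
        )


# Hypothetical content, for illustration only.
report = ClientReport(
    client="Example client",
    what_happened="Spend in one market grew much faster than leads.",
    why_it_happened="Budget kept flowing into colder audiences after the best ones saturated.",
    recommendation="Pause Spain. Move budget to France.",
)
print(report.render())
```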

From there, move to creative analysis. Let AI tell you which hooks work and which creative formats scale.

Then add prompt-based copy generation for ad variations.

Save bidding and budget tools for last. They require clean conversion tracking, solid data, and enough volume for the algorithms to learn.

Automate the data layer first. Then the analysis. Then the execution.

One mistake I see agencies make: they automate everything at once. They plug in PMax, turn on Advantage+, generate all copy with ChatGPT. Then they wonder why results are worse.

The order matters.

Data first. Analysis second. Automation last.

53% of PPC managers say the job is harder than it was two years ago. 62% cite black-box platforms as their biggest frustration.

The agencies ahead of those numbers are not the ones using the most AI. They are the ones using it in the right order.

Frequently Asked Questions

Can AI actually manage Google Ads campaigns end to end?

AI can manage bidding, budget allocation, and placement decisions across Google Ads. Performance Max handles this automatically across Google's inventory, including Search, Display, YouTube, and Gmail.

What AI cannot manage is strategy. Which audiences to target. What message to lead with. When to pull back spend on a market that is not converting. That is still your call.

How do PPC agencies use AI without losing control?

Layer your control. Let AI handle high-frequency decisions like bid adjustments and placement.

Keep human oversight on strategy, creative direction, and client communication. The agencies losing control are the ones who turned everything on and walked away.

Will AI replace PPC managers?

AI will replace the tasks that involve pulling data, formatting reports, and testing ad variations at scale. Those are already faster with AI.

AI will not replace the parts that require client context, judgment calls, and creative strategy. The role changes shape. It does not disappear.

What data should you feed AI before writing ad copy?

Campaign history. Landing page content. Past winning headlines. Competitor ads. Your client's brand voice guidelines.

The more specific you are, the better the output.

Never ask AI to write ad copy from a blank prompt.
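A simple guard can stop a blank-prompt request before it happens. This sketch checks a copy brief against the five inputs listed above; the dictionary keys and placeholder values are hypothetical, not a field schema from any tool.

```python
REQUIRED_INPUTS = (
    "campaign_history",
    "landing_page_content",
    "past_winning_headlines",
    "competitor_ads",
    "brand_voice_guidelines",
)


def missing_inputs(brief: dict[str, str]) -> list[str]:
    """List the required inputs that are absent or blank in a copy brief."""
    return [key for key in REQUIRED_INPUTS if not brief.get(key, "").strip()]


# Hypothetical brief with placeholder values.
brief = {
    "campaign_history": "<summary of past campaigns>",
    "landing_page_content": "<landing page copy>",
    "past_winning_headlines": "<top headlines by CTR>",
}
print(missing_inputs(brief))  # prints ['competitor_ads', 'brand_voice_guidelines']
```

Only send the brief to the AI once this list comes back empty.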

How do you automate Google Ads reporting with AI?

Pull campaign data into a structured format. Feed it to AI with a clear prompt: what happened, why it happened, what to do next.

The full system takes about 40 minutes per client instead of two hours. I built this at Sucana and documented the entire process.
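The "structured format" step can be as small as rolling daily rows up to one line per campaign before handing them to the model. A minimal sketch using Python's standard `csv` and `json` modules; the column names and numbers are hypothetical, and real platform exports use their own schemas.

```python
import csv
import io
import json
from collections import defaultdict

# Hypothetical daily export; real platform exports use their own column names.
RAW = """date,campaign,spend,leads
2026-03-01,Spain,300,6
2026-03-02,Spain,340,5
2026-03-01,France,200,8
2026-03-02,France,220,10
"""


def summarize(raw_csv: str) -> list[dict]:
    """Roll daily rows up to one row per campaign, with spend, leads, and CPL."""
    totals = defaultdict(lambda: {"spend": 0.0, "leads": 0})
    for row in csv.DictReader(io.StringIO(raw_csv)):
        t = totals[row["campaign"]]
        t["spend"] += float(row["spend"])
        t["leads"] += int(row["leads"])
    return [
        {
            "campaign": name,
            "spend": round(t["spend"], 2),
            "leads": t["leads"],
            "cpl": round(t["spend"] / t["leads"], 2) if t["leads"] else None,
        }
        for name, t in sorted(totals.items())
    ]


# Compact JSON like this is what goes into the prompt, not the raw daily rows.
print(json.dumps(summarize(RAW), indent=2))
```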

Is Performance Max better than manual campaigns?

Google says PMax generates 35% more conversions at 20% lower CPA. Those are averages across all advertisers, not specific to your account.

For lead gen agencies with clean conversion tracking, PMax often outperforms on efficiency. For agencies with messy data or niche audiences, manual campaigns give more control.
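It is worth checking what Google's headline claim implies. Spend equals conversions times CPA, so 35% more conversions at 20% lower CPA means spend of 1.35 × 0.80 = 1.08 times the baseline. A tiny worked version (the function name is illustrative):

```python
def implied_spend_multiple(conversion_lift: float, cpa_change: float) -> float:
    """Spend = conversions x CPA, so the spend multiple is the product of both multiples."""
    return (1 + conversion_lift) * (1 + cpa_change)


# Google's stated averages: +35% conversions, -20% CPA.
print(round(implied_spend_multiple(0.35, -0.20), 2))  # prints 1.08
```

In other words, the averages describe more conversions on roughly 8% more spend, which is one more reason to test against your own account rather than rely on them.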

Can ChatGPT write Google Ads headlines that fit the 30-character limit?

Not by default. Most AI tools ignore character limits and produce headlines that are too long.

Include the character limit in your prompt. Ask the AI to confirm each headline fits before presenting it.
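It also helps to check the output yourself before uploading anything. A minimal sketch that flags AI-generated headlines over 30 characters; the headlines are hypothetical. Note that `len()` counts Unicode code points, which matches Google's count for plain Latin text, but Google Ads may count some double-width characters (such as Chinese, Japanese, or Korean) as two, so treat this as a first-pass filter.

```python
MAX_HEADLINE_CHARS = 30  # Google Ads headline limit


def check_headlines(headlines: list[str], limit: int = MAX_HEADLINE_CHARS) -> list[tuple[str, int]]:
    """Return (headline, length) for every headline longer than the limit."""
    return [(h, len(h)) for h in headlines if len(h) > limit]


# Hypothetical AI output.
candidates = [
    "Book a Free Demo Today",
    "Cut Reporting Time With AI-Powered Insights",
    "Reports Your Clients Will Read",
]
for headline, length in check_headlines(candidates):
    print(f"Too long ({length}/{MAX_HEADLINE_CHARS}): {headline}")
```

The third headline is exactly 30 characters, so it passes; the limit is inclusive.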

How is AI changing Meta Ads creative testing in 2026?

AI analyzes creative performance faster and in more detail than most humans can. It identifies which hooks drive clicks, which body copy converts, and which formats hold CPL at scale.

The biggest shift is testing volume. What used to take weeks now takes days.

What AI tools work across both Google and Meta Ads?

Most native AI tools are platform-specific. Google has AI Max and PMax. Meta has Advantage+.

Cross-platform analysis requires a tool that pulls data from all platforms into one place. That is what we built at Sucana.

How do agencies prove value when clients think AI does everything?

Show them a report they have never seen before. Not a pitch about AI. An actual report with specific numbers, clear analysis, and one recommendation.

When clients see their own data explained at that depth, the AI question disappears.

Is AI-generated ad copy allowed by Google Ads policies?

Yes. Google does not ban AI-generated copy. The ads still need to meet all standard policies on accuracy, trademarks, and prohibited content.

The real risk is generic copy that does not convert because it lacks your client's specific context and voice.

How long does it take to see results from AI in paid media?

Reporting improvements are immediate. Your first AI report will be faster and more detailed than your manual process.

Creative testing improvements show up in 2-4 weeks. Campaign performance improvements compound over 60-90 days as patterns emerge from the data.

What is the biggest mistake agencies make when adding AI to paid media?

Automating everything at once. They turn on PMax, Advantage+, AI copy generation, and automated bidding all in the same week. Then they cannot tell what is working and what is hurting.

The better approach: add one AI layer at a time. Start with reporting. Measure the difference.

Then add creative testing. Then bidding.

How much does AI save agencies on reporting time?

In our experience, AI cuts reporting time by about two-thirds. What took Victor two hours per client now takes about 40 minutes. That figure is based on timed comparisons across five client accounts over six consecutive weeks.

The time saved is not the real value. The real value is what you do with that time. Victor uses it to dig deeper into the data and find problems earlier.

Do clients care whether you use AI for their campaigns?

Most clients do not care about the tool. They care about the result.

When Victor showed his AI-generated report to Pablo, the reaction was about the depth of the analysis. Not about what built it.

The agencies losing client trust are the ones explaining AI before showing results. Show the result first. Explain the tool never.