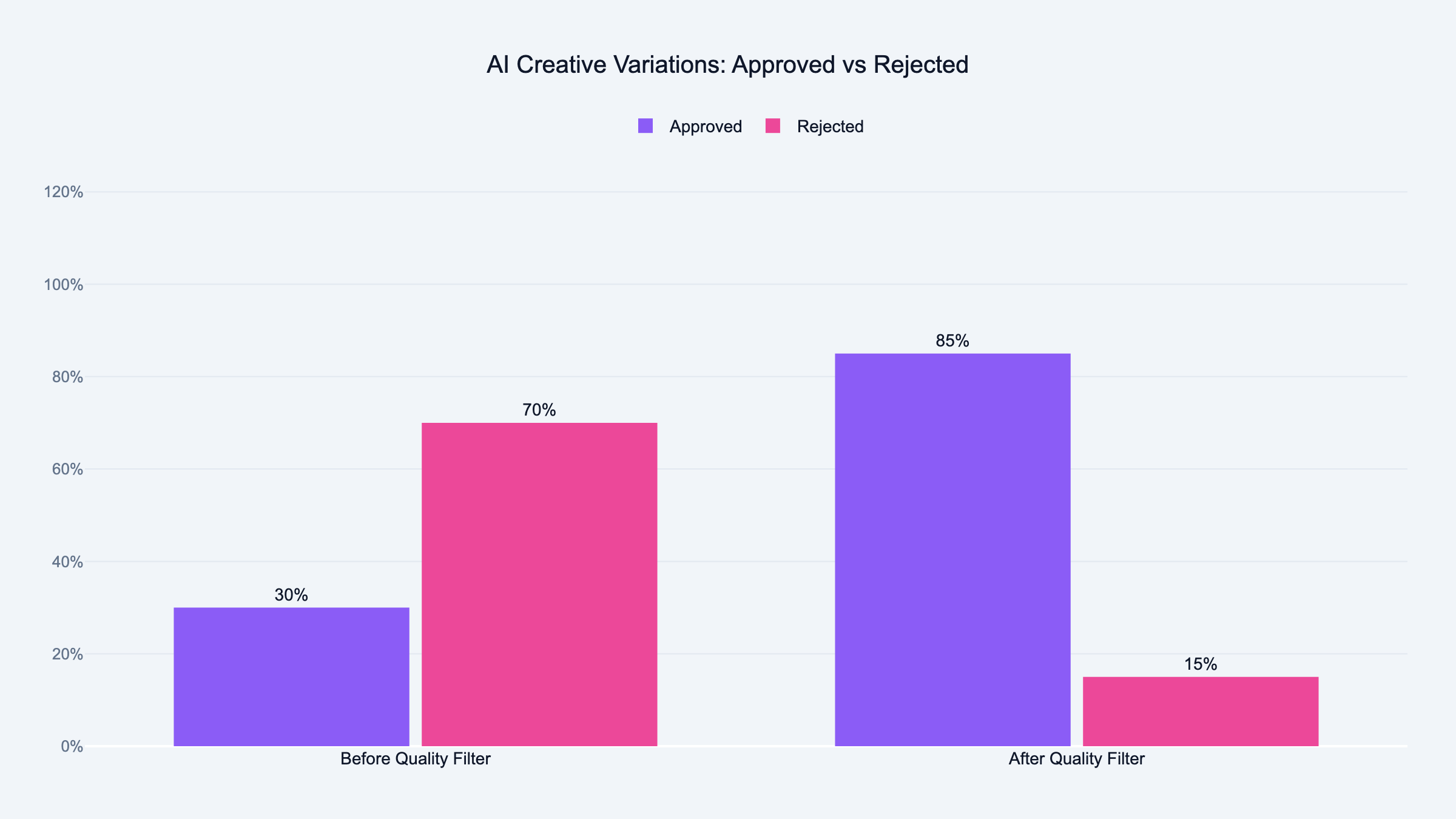

If your brand has strict visual guidelines, the AI will almost always produce something off-brand on the first pass. You can train it better over time, but early on, plan for high rejection rates.

And if you are running very low budgets, less than $50 per day per ad set, the ABO structure will not give you enough data. At that scale, run one strong creative and focus on offer testing instead.

What Winning Agencies Are Doing Differently

The agencies I see getting real results from AI creative testing share one habit. They treat AI as a brainstorming partner, not a creative director.

They use AI to generate 20 ideas quickly. Then a human picks the three worth testing.

Then the test runs with human-approved creatives inside an ABO structure. Then Advantage+ gets the winner to scale.

Four stages. AI involved in one of them.

That is not anti-AI. That is using AI where it is fast and cheap, which is idea generation, and keeping humans in the decisions that cost money to get wrong.

Meta's Advantage+ will keep getting better. The filter between AI output and live campaign will always matter.

For a full review of the third-party AI tools that support this workflow, I compared them all in the best AI tools for Meta Ads in 2026. For a broader look at what's worth using across all AI features in Facebook Ads, see AI for Facebook Ads: What's Actually Worth Using.

Frequently Asked Questions

How do I test creatives on Meta in 2026?

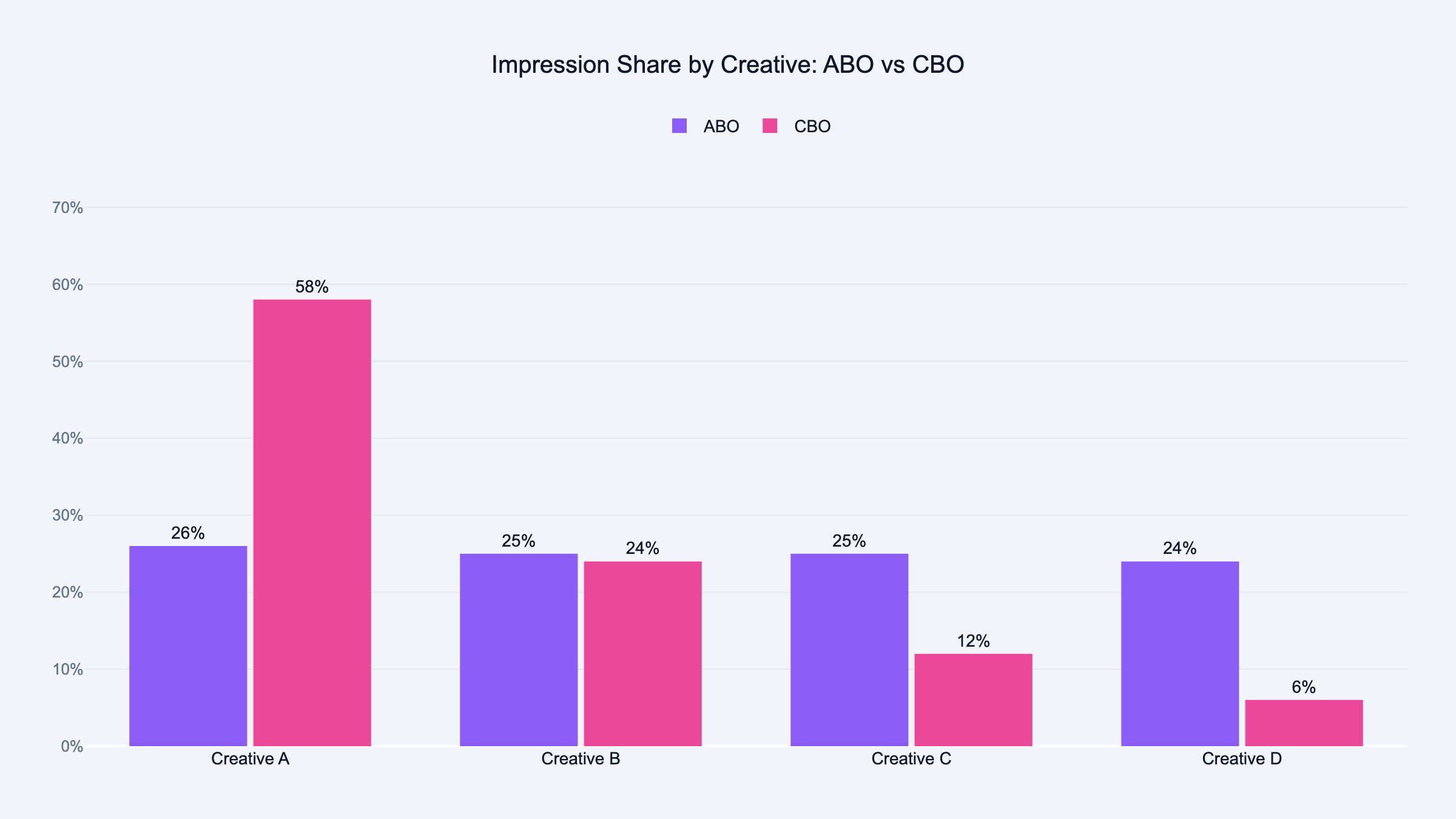

Use ABO (Ad Set Budget Optimization) for the test phase and keep each ad set to one creative variation. Run with equal budgets per ad set for at least seven days before comparing results.

Once you have a winner, scale it with Advantage+. The key change in 2026 is that Advantage+ is now the default, so manually switch to ABO before starting any creative test. Otherwise your budget consolidates onto early leaders before the data means anything.

What is the difference between ABO and CBO for creative testing?

ABO (Ad Set Budget Optimization) controls budget at the ad set level, so each creative variation gets equal spend. CBO (Campaign Budget Optimization) controls budget at the campaign level and pushes spend toward early winners.

For creative testing, ABO is the right choice. CBO decides too fast, before your test has enough data to mean anything.

What metrics should I use to evaluate creative performance?

Start with hook rate (percentage who watched the first 3 seconds of your video), then hold rate (percentage who watched past 15 seconds). These two tell you if the creative stops the scroll and holds attention.

Then look at CTR and CPA. Most performance problems trace back to hook and hold rate. Fix those first.
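If you pull these numbers into a spreadsheet or script, the two rates are simple ratios. Here is a minimal sketch in Python. It assumes both rates are measured against impressions, which is one common convention but not the only one, and the argument names are illustrative, not Meta reporting field names.

```python
def hook_rate(three_second_views: int, impressions: int) -> float:
    """Share of impressions where the viewer watched the first 3 seconds.

    Assumption: the denominator is impressions.
    """
    return three_second_views / impressions if impressions else 0.0


def hold_rate(fifteen_second_views: int, impressions: int) -> float:
    """Share of impressions where the viewer watched past 15 seconds.

    Assumption: same denominator as hook_rate, so the two are comparable.
    """
    return fifteen_second_views / impressions if impressions else 0.0


# Hypothetical numbers for one creative.
print(f"hook rate: {hook_rate(300, 1000):.1%}")
print(f"hold rate: {hold_rate(80, 1000):.1%}")
```

Whichever denominator you choose, keep it the same across every creative in the test so the comparison stays fair.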

How often should I refresh creatives to avoid creative fatigue?

Check frequency every week. If your frequency is above 2.5 and CTR is dropping, the creative is fatigued. Refresh before ROAS drops, not after.

For high-spend accounts, plan a new creative batch every two to three weeks. For lower-spend accounts, monthly is usually enough.

How do I detect creative fatigue in Meta Ads?

Three signals: frequency climbing above 2, CTR dropping week over week, and CPM increasing with no targeting changes.

When you see two of these three together, the audience has seen your ad too many times. Build a weekly check into your reporting routine. Fatigue shows up in the data before it shows up in your results. If your CPL spikes alongside these signals, run through my Facebook Ads CPL troubleshooting checklist to find the root cause.
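The two-of-three rule is easy to turn into a weekly check. The sketch below is one way to do it in Python, assuming you already have last week's and this week's numbers for a single ad. The `WeeklyStats` class and its field names are hypothetical, not part of any Meta API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WeeklyStats:
    """One week of numbers for a single ad (hypothetical structure)."""
    frequency: float
    ctr: float
    cpm: float


def fatigue_signals(prev: WeeklyStats, curr: WeeklyStats,
                    frequency_threshold: float = 2.0,
                    targeting_changed: bool = False) -> List[str]:
    """Return which of the three fatigue signals are present this week."""
    signals = []
    if curr.frequency > frequency_threshold:
        signals.append("frequency")
    if curr.ctr < prev.ctr:
        signals.append("ctr_drop")
    # A CPM increase only counts when targeting stayed the same.
    if curr.cpm > prev.cpm and not targeting_changed:
        signals.append("cpm_rise")
    return signals


def is_fatigued(prev: WeeklyStats, curr: WeeklyStats) -> bool:
    """Two or more signals together means the creative is likely fatigued."""
    return len(fatigue_signals(prev, curr)) >= 2


last_week = WeeklyStats(frequency=1.8, ctr=0.012, cpm=9.0)
this_week = WeeklyStats(frequency=2.3, ctr=0.009, cpm=9.0)
print(fatigue_signals(last_week, this_week), is_fatigued(last_week, this_week))
```

The frequency threshold is a parameter on purpose. Pass 2.5 if you prefer the stricter cutoff from the weekly refresh check.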

What should I A/B test in Meta Ads?

Test one variable at a time. The most impactful tests are usually the opening line for copy, the first three seconds for video, or the main image for static ads.

The mistake most agencies make is testing too many variables at once. You end up with results you cannot explain. One variable, clean test, clear answer.

Can Meta's Advantage+ Creative replace manual testing?

Not for finding winners. Advantage+ is good at scaling creatives that already work. It is not reliable for identifying which of your ideas is the best one.

Use manual testing with ABO to find the winner. Then use Advantage+ to scale it. That is the workflow.

How do I prevent off-brand outputs from AI creative tools?

Give the AI a one-page brand brief every time. Include your brand voice in three sentences, the words you never use, the tone you are going for, one example of copy you love, and one you hate. I cover the full briefing system in my guide on AI prompt engineering for PPC.

AI tools improve dramatically when they have a reference point. Without it, they default to generic.

How much budget do I need to test Meta Ad creatives properly?

Each variation needs enough spend to get at least 50 clicks before you draw conclusions. At a $2 CPC, that is $100 per variation.

If you are testing four variations, budget $400 minimum for the test phase. Less than that and you are reading noise, not signal.
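The same arithmetic works for any CPC and any number of variations. A minimal Python sketch, with the 50-click floor from above as the default (the function name is just for illustration):

```python
def min_test_budget(variations: int, cpc: float, min_clicks: int = 50) -> float:
    """Minimum total spend so every variation can reach min_clicks."""
    per_variation = min_clicks * cpc
    return per_variation * variations


# The example from the text: four variations at a $2 CPC.
print(min_test_budget(variations=4, cpc=2.0))  # 400.0
```

Plug in your own account's average CPC before you commit to a number of variations. If the total is more than you can spend, cut variations instead of cutting spend per variation.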

What is the fastest way to find a winning creative on Meta?

Generate five variations using AI. Cut any that fail the brand check.

Run the remaining two or three in ABO with equal budgets for seven days. The one with the best hook rate and lowest CPA wins.

The whole cycle from brief to result takes about ten days at a $50 per day test budget. That is fast enough to iterate twice a month.
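If you want to make the final call mechanical, here is a rough Python sketch. It makes two assumptions that are mine, not hard rules: a variation needs at least 50 clicks to be considered, and a clear winner is a variation that has both the best hook rate and the lowest CPA. If different variations lead on each metric, it returns nothing and leaves the call to a human. The `VariantResult` class is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VariantResult:
    """End-of-test numbers for one creative (hypothetical structure)."""
    name: str
    clicks: int
    hook_rate: float
    cpa: float


def pick_winner(results: List[VariantResult],
                min_clicks: int = 50) -> Optional[VariantResult]:
    """Return the variation that leads on both hook rate and CPA, else None."""
    # Ignore variations that have not collected enough clicks to judge.
    eligible = [r for r in results if r.clicks >= min_clicks]
    if not eligible:
        return None
    best_hook = max(eligible, key=lambda r: r.hook_rate)
    best_cpa = min(eligible, key=lambda r: r.cpa)
    # Only call it when the same creative wins on both metrics.
    return best_cpa if best_hook is best_cpa else None


results = [
    VariantResult("a", clicks=60, hook_rate=0.32, cpa=18.0),
    VariantResult("b", clicks=75, hook_rate=0.25, cpa=24.0),
    VariantResult("c", clicks=20, hook_rate=0.40, cpa=10.0),  # too few clicks
]
winner = pick_winner(results)
print(winner.name if winner else "no clear winner")
```

A `None` result is not a failed test. It usually means the hook is working on one creative and the offer is landing better on another, which is a useful brief for the next round.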

Why is my Advantage+ Creative generating weird outputs?

Advantage+ generates variations from your uploaded assets using its own model. It does not know your brand, your tone, or what "off-brand" means for you.

The only way to control the output is to control the input. Upload only assets you have already approved.

Turn off text variations if you want to keep the copy in your hands. Review before scaling.

Is AI creative testing worth it for small agencies?

Yes, but the value is in idea generation, not automation. Use AI to write 20 headline variations in ten minutes instead of two hours. Then pick the best two yourself.

The time savings compound. An hour saved on every creative brief adds up across a full client portfolio.